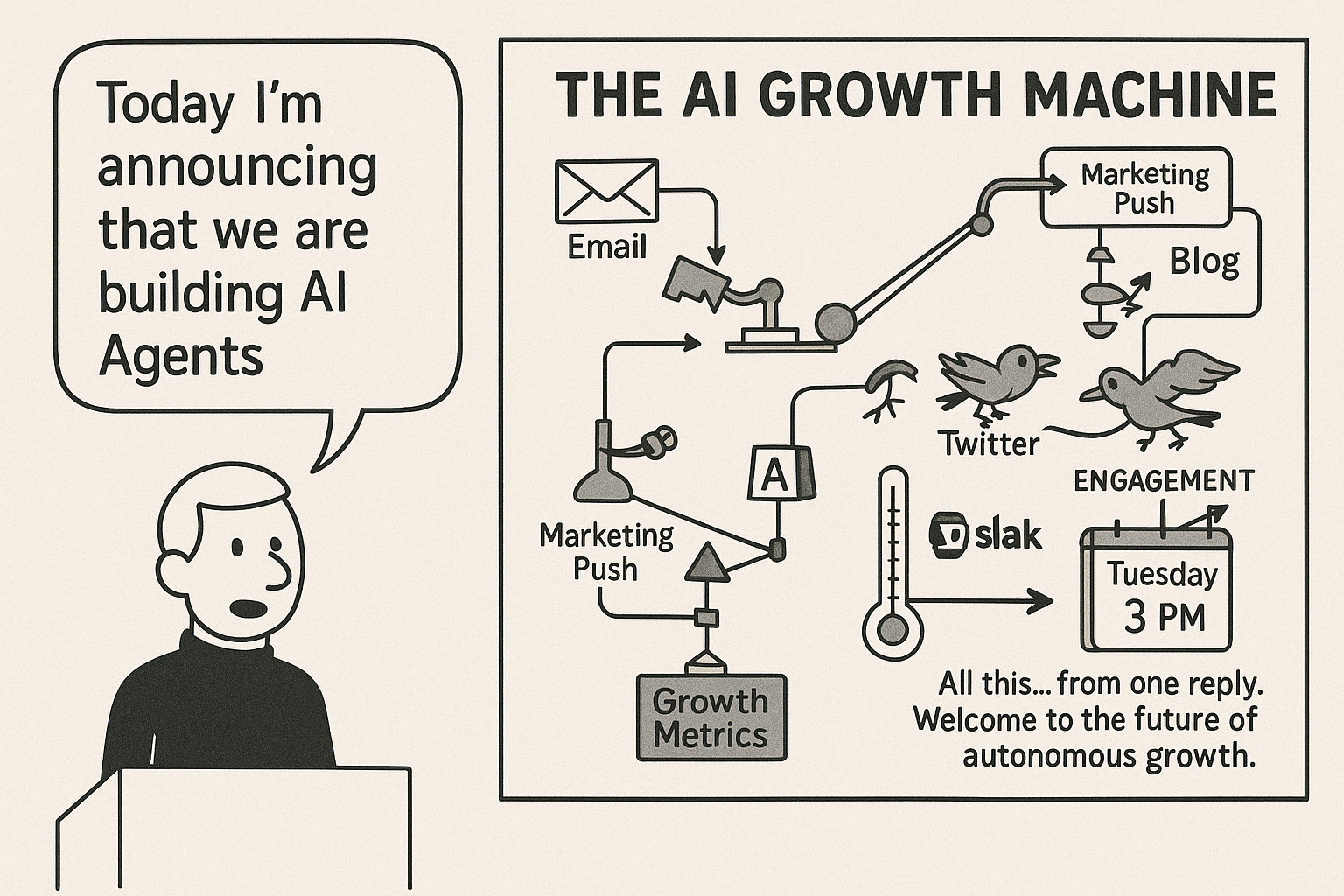

Image generated using GPT-4o on May 12th, 2025.

You folks know what a Rube Goldberg machine is, right? As I implement complex, multi-step workflows at scale for my clients, I’m reminded of how the non-deterministic nature of LLMs compounds across multiple downstream steps. Combine that with standard engineering realities (rate limits, flaky connections, retries, timeouts) and it quickly becomes more than a toy problem. It becomes real engineering.

Take one of my current workflows: it takes 15 minutes to complete. That’s not idle time; it’s churning through PDF parsing, database reconciliation, and structured-to-unstructured transformations, all of which carry their own failure probabilities. At one point, I parallelized tasks using `ThreadPoolExecutor`, but rolled it back after too many hard-to-debug errors. Turns out, a pesky 503 from Bedrock was derailing things mid-run.
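One way to keep a transient 503 from derailing a parallel run is to retry each task with backoff and record failures per task instead of letting one exception abort everything. The sketch below is a minimal illustration, not the workflow described above: `TransientError`, `with_retries`, and `run_all` are hypothetical names, and a real client would need its own mapping from service errors to "retryable".

```python
import random
import time
from concurrent.futures import ThreadPoolExecutor, as_completed


class TransientError(Exception):
    """Hypothetical stand-in for a retryable failure such as an HTTP 503."""


def with_retries(fn, *args, attempts=4, base_delay=0.5, sleep=time.sleep):
    """Call fn(*args), retrying on TransientError with exponential backoff."""
    for attempt in range(1, attempts + 1):
        try:
            return fn(*args)
        except TransientError:
            if attempt == attempts:
                raise  # out of retries: surface the error to the caller
            # Backoff with jitter so parallel workers do not retry in lockstep.
            delay = base_delay * 2 ** (attempt - 1)
            sleep(delay + random.uniform(0, delay / 2))


def run_all(fn, items, max_workers=4, **retry_kwargs):
    """Run fn over items in parallel; return (results, errors) keyed by item."""
    results, errors = {}, {}
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        futures = {
            pool.submit(with_retries, fn, item, **retry_kwargs): item
            for item in items
        }
        for future in as_completed(futures):
            item = futures[future]
            try:
                results[item] = future.result()
            except Exception as exc:  # record the failure, keep the run going
                errors[item] = exc
    return results, errors
```

Returning `errors` alongside `results` makes it possible to see exactly which items failed and why, which is usually the missing piece when debugging a thread pool.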

At this scale, you’re not just prototyping—you’re building distributed, fault-tolerant systems. AI workflows need the same care we give to production infra.
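To put rough numbers on why per-step reliability matters, here is a back-of-the-envelope sketch. It assumes each step fails independently, which real pipelines rarely satisfy exactly, and the rates in the usage lines are made up for illustration, not measurements from any workflow.

```python
import math


def end_to_end_success(step_success_rates):
    """Probability that every step succeeds, assuming independent steps."""
    return math.prod(step_success_rates)


def success_with_retries(rate, attempts):
    """Probability that a step succeeds within `attempts` independent tries."""
    return 1 - (1 - rate) ** attempts


# Illustrative only: ten steps, each assumed to succeed 97% of the time.
baseline = end_to_end_success([0.97] * 10)

# The same ten steps when each one is allowed up to three tries.
retried = end_to_end_success([success_with_retries(0.97, 3)] * 10)

print(f"no retries: {baseline:.3f}, with retries: {retried:.3f}")
```

Under these assumptions, a chain of individually reliable steps still fails end to end a meaningful fraction of the time, and per-step retries recover most of that loss.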

So, yeah… the comic above? It’s a little funny, but also a little too real.

All this… from one reply. Welcome to the future of autonomous growth.

🛠️ Curious how to make your AI agents production-ready? Drop me a note.
